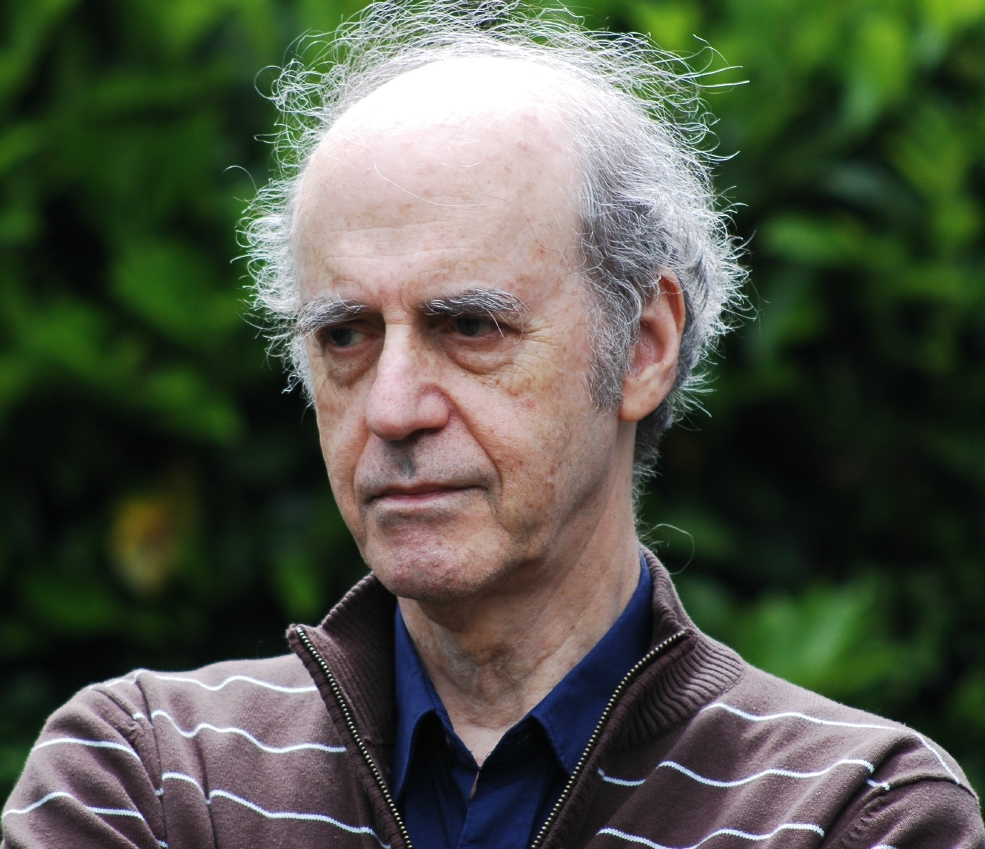

Last month, Professor Aaron Sloman was awarded the 2020 K. Jon Barwise Prize, which recognizes “significant and sustained contributions to areas relevant to philosophy and computing by an APA member. The prize will serve to credit those within our profession for their life long efforts in this field.”

This is still a draft. Please see the revision history/status below.

This blog post briefly

- describes some of the ways in which Aaron Sloman influenced me.

- points out some of his original contributions to cognitive science.

- shows some of the ways in which Sloman’s work is relevant to current discussions in cognitive science writ large.

I hope this will help scientists and other readers interested in the research problems discussed here to make use of Sloman’s work. I will try to pay a fuller homage to Aaron in one or two chapters of my future book, Great Contemporaries in Cognitive Science and Related Endeavours.

First, some general background on Aaron, paraphrasing the Wikipedia page on Aaron Sloman:

Aaron held the Chair in Artificial Intelligence and Cognitive Science at the School of Computer Science at the University of Birmingham (1991-2011), and before that a chair with the same title at the University of Sussex. Since “retiring” he is Honorary Professor of Artificial Intelligence and Cognitive Science at Birmingham. He has published widely on philosophy of mathematics, epistemology, cognitive science, and artificial intelligence; he also collaborated widely, e.g. with biologist Jackie Chappell on the evolution of intelligence.

I put “retiring” in scare quotes, above, because Aaron Sloman continues to contribute to AI with amazing cognitive productivity.

In the era that followed the Dartmouth conference (1956), Aaron has been one of the most important contributors to understanding the space of possible minds. While Aaron is considered a philosopher, his book The Computer Revolution in Philosophy shows that the distinctions between empirical and formal sciences are not clear-cut. Compare this Twitter thread in which I argue that science, philosophy and mathematics overlap. For example, one cannot understand the actual without understanding the possible.

Aaron Sloman was my Ph.D. thesis supervisor and one of the greatest influences on my thinking. He is referenced many times on this blog, in my papers and books.

My road to Sloman

For several months while a Psychology undergrad in 1989, I shopped the world for the best programme in which to do a Ph.D. in Cognitive Science with the best thesis supervisor I could find. I got brochures from all the major psychology and COGS programmes offered in the Commonwealth. Sussex University and Aaron Sloman were at the top of my list. I knew that Aaron and I would be very compatible because Professor Claude Lamontagne had already told me a lot about him. I had already taken an entire self-directed reading course in which I researched causal reasoning, pitting associationism against more Kantian views, and favored a Kantian approach. And I soon discovered Sloman was a champion of Kant. So, in 1990 with Commonwealth and NSERC scholarships, I was off to Sussex to study with Aaron Sloman.

Lamontagne was a Ph.D. student in AI at the University of Edinburgh from 1972 to 1976, and Aaron Sloman did a postdoc in AI there during that period. Lamontagne had many things, all extremely positive, to say about Sloman’s mind. (For example, Lamontagne told me, and I later witnessed, that Sloman can attend a talk on any subject in cognitive science or AI, defined very broadly, and provide the best summary and the most incisive question of anyone in attendance. Aaron’s responses are often worth the cost of attending even if the speaker’s talk is not great.)

So when I arrived at Sussex, I was able to get straight to work, without having to argue with my supervisor about how the mind should be studied.

In my 1994 Ph.D. thesis acknowledgements, I thanked Claude Lamontagne

for showing me the importance of Artificial Intelligence for psychology; for demonstrating to me the poverty of empiricism; and for making me aware of the British tradition in computational psychology.

At Sussex at the time, there were also luminaries like Maggie Boden, Andy Clark, and Phil Agre, and a cohort of very bright D.Phil students. (Relatedly, Luiz Pessoa, an affective scientist mentioned below, had a D.Phil offer from Sussex for that same year but chose an American university. Geoffrey Miller started a D.Phil at Sussex the following year.)

Some things I learned from Aaron Sloman

The above sets the context for me expressing a small subset of what I learned from Aaron, either explicitly or implicitly.

I had applied to study causal reasoning at Sussex. However, from Aaron I learned that, and how, it is possible to understand motivation and emotions in information-processing terms, and how important it is to do so. So I joined his Cognition and Affect project at its founding.

I also learned from Aaron Sloman that in theoretical AI, it is important to choose a programming language whose expressive power and ease of use are commensurate with the level of thinking one wants to achieve. (I had wanted to use Smalltalk because I knew the language and wanted to focus on theory, not implementation. Aaron persuaded me to use Poplog Pop-11, which is better suited to AI. I never regretted choosing Pop-11.)

Aaron taught me, implicitly, how a great Cognitive Science program should be designed. With Maggie Boden, he had co-founded the School of Cognitive and Computing Sciences (“COGS”) at Sussex. When I was there as a D.Phil student in 1990-1991, it was one of the very best, and perhaps largest, COGS graduate programmes in the world.

Aaron was recruited by the University of Birmingham, England, to become chair of its then struggling Computer Science programme, and co-chair (with the late Dr. Glyn Humphreys [my Ph.D. thesis co-supervisor]) of its new Cognitive Science programme. So, I left Sussex and followed Aaron to Birmingham. (This was despite some false warnings from some Sussex folks who claimed “you won’t like it there. It’s post-industrial you know”. I loved it!)

So I then learnt about building a research programme and rebuilding a computer science department. Aaron helped recruit and attract great speakers, professors, and students. He always welcomed presentations from staff and students. The School of Computer Science at the University of Birmingham went on to become the top-ranked computer science programme in the UK, according to the Guardian! At Aaron Sloman’s festschrift in 2011, the then chair commended Aaron at length for his energy and creativity in building their world-class programme.

Communicating with leaders

I also learned from Aaron Sloman that it is sometimes possible to influence politicians and leaders of large organizations by writing to them. The British government in the 1980s, through the Alvey Programme (led by Brian Oakley), enacted some rules favoring the purchase of GEC Series 63 computers in AI research labs, which made it difficult for those researchers to get their hands on American computers (e.g., Sun workstations).

Sloman, who had a fellowship from GEC at the time, considered the GEC Series 63 computers to be suboptimal. He wrote to the Prime Minister, indicating that the Alvey policy was not in line with her own policy objectives. His letter made its way back to the Alvey programme, leading them to call him. Aaron suggested they give the GEC-63 developers a few months to prove they could make the computer usable, and if they failed, the project should be cancelled. Some time later, the GEC-63 project was abandoned as a failure.

The story, as it was summarized to me in the early 1990s, made an impression on me. In February 2010, after the iPad was announced and panned by the media, I wrote an email to Steve Jobs saying that I was very impressed with the iPad and that I had some ideas about how Apple’s technology could better support the needs of knowledge workers and students. Steve then requested a white paper from me, which I eagerly provided. I later learned that Steve himself, from a young age, would pick up the phone to call leaders he wanted to meet (starting with Bill Hewlett).

Some of Aaron Sloman’s contributions to knowledge

It would be a research project of its own to list Aaron Sloman’s contributions to cognitive science (including AI and philosophy)! One can easily get lost in his sprawling web site, but it’s worth navigating! I regularly visit it to see what he’s been up to.

For an overview of Aaron Sloman’s work to 2011, I recommend Maggie Boden’s encomium, “A Bright Tile in AI’s Mosaic”, in From Animals to Robots and Back: Reflections on Hard Problems in the Study of Cognition – A Collection in Honour of Aaron Sloman (Jeremy L. Wyatt et al., eds., Springer). In fact, the entire volume is a tribute to Aaron Sloman. I was delighted to contribute a chapter to that volume, “Developing expertise with objective knowledge: Motive generators and productive practice” (PDF), which explicitly builds on Aaron’s work, transferring it to a new area.

Rebooting AI from 1975 onwards

The excellent book Rebooting AI by Gary Marcus & Ernest Davis was published in 2019.

It is correct to say, however, that Aaron Sloman has been trying to “reboot AI” since the mid-1970s. By this I mean that he has been drawing attention to, and exploring, important neglected problems in AI. That is not merely “philosophy”; it is AI.

Every engineer knows, or should know, that the most important part of engineering is figuring out what you should be trying to build. (I recommend The Reflective Practitioner by Donald Schön on this topic.) So it is with science (and reverse engineering).

Beyond Fregean representations and reasoning

Aaron Sloman’s 1962 D.Phil thesis, Knowing and Understanding (he was a Rhodes Scholar), argued, in a Kantian vein, that there are synthetic necessary truths. In other words, some truths can be shown to be necessarily true without being derived from formal (logical) truths. When reading his thesis a few years ago, I was struck that it reads like an AI thesis. Yet Sloman had not yet encountered AI, which had barely made it to the UK.

But when Sloman discovered AI, he noticed that many (perhaps most) of its researchers thought that reasoning could be adequately captured with Fregean representations (predicate calculus). His thesis research implied that this could not be true, because geometrical reasoning cannot adequately be accounted for with formal logic or predicate calculus.

This led to the publication of several beautiful papers over decades. One of my favorites is Diagrams in the Mind?, which starts out with:

Consider the trick performed by Mr Bean (actually the actor Rowan Atkinson): removing his (stretchable) underpants without removing his trousers. Is that really possible? Think about it if you haven’t previously done so.

If you like that paper, do also read Chapter 7, Intuition and Analogical Reasoning, of The Computer Revolution in Philosophy. Your thinking about the mind may never be the same after that.

I noticed recently that Mateusz Hohol and Marcin Miłkowski are pursuing a related line of research. Their interesting recent paper Where does geometric cognition come from? cites Aaron’s work. I wish I had time to delve deeply into this area of research. These are hard and fascinating problems.

First and foremost in applying the concept of information processing to human and AI minds

To my knowledge (and I have spent hours researching this), Aaron Sloman is the first AI researcher to write systematically about the information processing architecture of human and artificial minds.

Not only was he first; I don’t think any other AI researcher has published more extensively on the subject, not even Allen Newell and his group. This is not to take anything away from Newell’s important contributions, obviously. Sloman’s research team implemented architectures, but Newell’s group produced a software toolkit that has been used more extensively than the Birmingham one.

Incidentally, Paul Rosenbloom and I have discussed the history of information-processing architecture concepts (in person and by email). I think we are on the same page with respect to the history of the concept of architecture in AI. Paul notes that Herbert Simon was not enamored of the notion of information-processing architectures, despite his colleague Newell’s enthusiasm. That is one of the many differences between Sloman’s work and Simon’s otherwise excellent 1967 paper, “Motivational and emotional controls of cognition”, which pertains to perturbance (discussed below).

Developed the concept of motive processing

Aaron introduced the concept of motive processing. The mind is not merely a processor of dry factual information; it also has motive generators, motive generator generators, and various mechanisms that deal with motivators.

Emotion as perturbance in motive processing agents

Sloman developed a very original framework for understanding “emotions” in humans and artificial minds, first published with Monika Croucher:

- Sloman, A., & Croucher, M. (1981a). Why robots will have emotions. In Proceedings IJCAI 1981, Vancouver. (PDF)

- Sloman, A., & Croucher, M. (1981b). You don’t need a soft skin to have a warm heart: Towards a computational analysis of motives and emotions.

Aside: in 1992, Aaron and I started calling these states perturbances. After I left the project, he labeled them ‘tertiary emotions’. But ‘emotion’ is such a polysemous term that I prefer the technical term ‘perturbance’. That way people are less likely to falsely assume they know what is being discussed, and might even bother to “accommodate” (in the Piagetian sense) to the new concept. (“Perturbant mental states”, “perturbances”, “perturbant information processing” and “perturbant emotions” are all acceptable expressions.) See our AISB 2017 paper.

The core idea is that the minds of agents that meet the specified requirements of autonomous agency will necessarily be subject to perturbance. Perturbance is an emergent mental state in which motivators tend to disrupt or otherwise influence mental processing, even if the agent (via its reflective / “meta-management” mechanisms) attempts to suppress these motivators. Unlike the related concept of repetitive thought (e.g., per Watkins’s well-known 2008 paper), perturbance is not an empirical concept but an integrative design-oriented concept. It is a dispositional concept whose interpretation requires a functional architecture, involving mechanisms such as motivator generators and activators, insistence filtering, etc. Importantly, perturbances are emergent rather than ‘designed in’ or intrinsically functional. (Klaus Scherer’s slightly later theory makes a similar assumption. It is another example of two research programmes running in parallel without citing each other. I have been trying to bring the theories together.)
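For readers who think in code, here is a toy sketch of that core idea, under drastically simplifying assumptions. Everything in it (the `Motivator` class, the `insistence_filter` threshold, the `decay` parameter, the 0..1 insistence scale) is my own hypothetical illustration, not Sloman’s design and not the Pop-11 code of the Cognition and Affect project. It shows a motivator that meta-management has rejected but that, because its insistence stays above the filter threshold, keeps surfacing and interrupting: a crude analogue of perturbance. Since perturbance is a dispositional concept, bear in mind that the sketch only counts actual interruptions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Motivator:
    """A toy motivator (hypothetical): some content plus an insistence value."""
    content: str
    insistence: float       # capacity to grab attention, on an assumed 0..1 scale
    rejected: bool = False  # True if meta-management has decided not to pursue it


def insistence_filter(motivators: List[Motivator], threshold: float) -> List[Motivator]:
    """Let through only motivators whose insistence reaches the threshold."""
    return [m for m in motivators if m.insistence >= threshold]


def run(motivators: List[Motivator], threshold: float, steps: int,
        decay: float = 0.0) -> Dict[str, int]:
    """Count, per motivator, how often it surfaces despite being rejected.

    Meta-management here can mark a motivator as rejected, but it cannot
    directly lower its insistence; only the (assumed) decay does that.
    """
    interruptions = {m.content: 0 for m in motivators}
    for _ in range(steps):
        for m in insistence_filter(motivators, threshold):
            if m.rejected:
                # Surfaced although meta-management rejected it.
                interruptions[m.content] += 1
        for m in motivators:
            m.insistence = max(0.0, m.insistence - decay)
    return interruptions


if __name__ == "__main__":
    agent = [
        Motivator("finish paper", 0.5),
        Motivator("retaliate for insult", 0.9, rejected=True),
    ]
    print(run(agent, threshold=0.6, steps=5))
    # prints {'finish paper': 0, 'retaliate for insult': 5}
```

Note that no line of the sketch implements “perturbance” as such; the repeated interruptions fall out of the interaction between the filter and meta-management, which loosely echoes the point that perturbances are emergent rather than designed in.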

The concept of perturbance overlaps in many ways with Herbert Simon’s concept of emotion, mentioned above. But there are also many important differences. As noted above, perturbance is an architecture-based concept, whereas Simon’s emotion concept isn’t. Perturbance also rests on the notion that motivators differ in insistence, another concept lacking from Simon’s theory. Other differences between the concept of perturbance and Simon’s theory of emotion are discussed in an upcoming paper on perturbance.

Agnes Moors has recently developed an argument that makes fundamental claims similar to those of Sloman’s perturbance theory. See, for example:

Moors, A. (2017). Integration of two skeptical emotion theories: Dimensional appraisal theory and Russell’s psychological construction theory. Psychological Inquiry, 28(1), 1–19. doi:10.1080/1047840X.2017.1235900

In short, Moors proposes that emotion is to be understood as involving a two-level architecture (Sloman and I assume at least three), predominantly biased towards purposive action (similar to Sloman). Like Sloman, she does not assume that the explanandum is emotion or even emotional episodes. Her emotion research programme focuses on behavior and experience. Sloman and I, however, in taking an integrative design-oriented approach (which includes the designer stance, also advocated by the late John McCarthy, one of the founders of AI, in his 2008 paper “The well-designed child”), focus not on predicting behavior but on understanding competence.

Moors does not cite Sloman, unfortunately. Sylwia Hyniewska, Monika Pudlo and I have submitted a paper that discusses some of the overlap. We are (part-time) picking up where Sloman’s Cognition and Affect project left off, and trying to connect more with theoretical psychology. (As may be obvious from the CogSci Apps invention, Hook, I’m into building bridges. 😊)

This section shows that Sloman’s work on affect is historically original and still relevant.

Against sharp discontinuities between cognition and emotion

The idea that there is no sharp discontinuity between cognition and affect is gaining traction. Luiz Pessoa’s 2013 book, The Cognitive Emotional Brain, is largely about that.

However, Aaron Sloman was the first person in cognitive science to make this point systematically.

I tweeted to Luiz Pessoa (for whose work I have immense respect), asking why his book hadn’t recognized the precedent. I was trying to help the research community connect mutually relevant research programmes, and alluding to why that is sometimes difficult, per this Twitter thread. Too often in “cognitive” science (including affective science), research projects that are relevant to each other fail to take each other into account. We are failing to benefit from each other’s work as much as we should. (We can all use help here.)

While we are on the subject, my Ph.D. thesis, Goal Processing in Autonomous Agents, has an entire section on “Valenced knowledge and conation” (the expression “valence” appears multiple times).

As noted elsewhere on this website, CogZest’s name is a nod to the blending of cognition and affect. (Not merely interaction, but blending). It’s also about enthusiasm for rich mental life. My first book, Cognitive Productivity: Using Knowledge to Become Profoundly Effective, also assumes this blending, and that a major purpose of so-called ‘cognition’ is to develop motivational machinery, machinery that is also largely cognitive! (The mind is full of strange loops, as Douglas Hofstadter calls them).

This quotation from Roy Baumeister is aligned with Sloman’s contributions:

I hope that moving toward a general theory of motivation will help psychology as a whole acknowledge and embrace the fundamental importance of motivation in the grand scheme of integrative psychological theory. (Baumeister, 2015, p. 9)

In fact, Pudlo, Hyniewska and I used that as the opening quotation of our upcoming paper on perturbance and the integrative design-oriented approach.

Against simplistic dichotomies and continua-assumptions more generally

Sloman’s arguments against cognition-emotion dichotomies have a more general basis in his longstanding arguments against simplistic dichotomies, e.g., between things with minds and things without, cognition and emotion, etc.

It is typically assumed that the appropriate alternative to false dichotomies is “shades of grey”, i.e., underlying continua. That assumption is now particularly attractive to the quantitatively inclined, who seem to have taken over cognitive science, including AI. Pessoa’s The Cognitive Emotional Brain is of the quantitative ilk. However, while Sloman of course does not reject quantitative theoretical methods, he discovered an alternative, namely that realms often admit of several sharp discontinuities.

For instance, Sloman argues that there is a space of possible minds, with many sharp discontinuities, to be explored. See, for instance, his 1984 paper, The structure of the space of possible minds. He has followed up on this theme countless times. I note that a related project, Mining the Computational Universe, has emerged at Edge.org. I don’t know whether it cites Aaron Sloman’s seminal work on the subject.

Incidentally, dear reader,

Discontinuities: Love, Art, Mind is the title of a (still only ‘upcoming’) book of which I am editor (and an author). Only the final chapter deals directly with discontinuities.

Developed the concept of architecture-based motivation

Aaron also developed an essential (integrative design-oriented) concept for understanding the motivation of deeply autonomous agents, including humans. Architecture-based motives are generated relatively directly by reactive motive generating / activating mechanisms rather than via means-ends analysis or other deliberative forms of planning.

Aaron had developed the concept years earlier, but I think the first paper in which he used the expression “architecture-based motivation” is his 2002 “Architecture-based conceptions of mind”, in Synthese Library, 403–430 (PDF). He has frequently updated that paper online (2008-2020): Architecture-based motivation.

As Aaron argues, architecture-based motivation is partly a response to hedonism. I’ve elaborated on that angle in Psychological Hedonism Meets Value Pluralism: An Integrative Design-oriented Perspective. I’ve also developed the idea in Cognitive Productivity: Using Knowledge to Become Profoundly Effective.
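To make the contrast with hedonism concrete, here is a minimal sketch, assuming that motive generators can be modelled as simple trigger-to-motive functions. The names (`make_generator`, `architecture_based_motives`, `reward_based_motive`) and the example triggers are hypothetical, chosen for illustration only; this is not code from Sloman’s papers. The architecture-based generators emit motives directly when triggered, without computing expected pleasure or reward; the reward-based function, by contrast, needs a table of predicted payoffs before it can adopt any motive.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical types: a perceived situation, and a generator mapping it to a motive.
Situation = Dict[str, bool]
MotiveGenerator = Callable[[Situation], Optional[str]]


def make_generator(trigger: str, motive: str) -> MotiveGenerator:
    """Build a reactive generator: if `trigger` is perceived, emit `motive`.

    Nothing here estimates pleasure, reward, or the motive's usefulness.
    """
    def generate(situation: Situation) -> Optional[str]:
        return motive if situation.get(trigger, False) else None
    return generate


def architecture_based_motives(generators: List[MotiveGenerator],
                               situation: Situation) -> List[str]:
    """Collect whatever motives the installed generators produce right now."""
    motives = []
    for generate in generators:
        motive = generate(situation)
        if motive is not None:
            motives.append(motive)
    return motives


def reward_based_motive(expected_reward: Dict[str, float]) -> str:
    """Contrast case: a hedonistic scheme adopts whichever option is
    predicted to yield the most reward."""
    return max(expected_reward, key=expected_reward.get)


if __name__ == "__main__":
    child = [
        make_generator("ball_nearby", "kick the ball"),
        make_generator("puzzle_visible", "try the puzzle"),
    ]
    print(architecture_based_motives(child, {"ball_nearby": True}))
    # prints ['kick the ball']
```

What matters in this sketch is what is absent: the first two functions maximize nothing. Whatever made such generators worth having (evolution or prior learning, say) lies outside the moment of motive generation.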

Other work and problems that need the concept of architecture-based motivation

Aaron is not the only one who challenges hedonism. But many motivation researchers have not yet caught on to the idea of architecture-based motivation, presumably because they have not yet encountered it or the integrative design-oriented approach. (Too many silos, not enough bridge building in psychology.)

Higgins: beyond pleasure and pain

For example, Tory Higgins also embraces value pluralism (vs. hedonism).

Higgins, E. T. (1997). Beyond pleasure and pain. American Psychologist.

Higgins, E. T. (2011). Beyond Pleasure and Pain: How Motivation Works. OUP USA.

However, Higgins’ theory does not point to asynchronous motivators, let alone the more general concept of architecture-based motivation.

Social and sexual signaling (Simler & Hanson and Miller)

Similarly, consider Kevin Simler and Robin Hanson’s book The Elephant in the Brain: Hidden Motives in Everyday Life, which points to social signaling as an important form of motivation. I am sure that social signaling (including signaling of cognitive capacities, as outlined by Geoffrey Miller in The Mating Mind) is a very important source of motivation.

However, it does not suffice to claim that these ‘motives’ are unconscious. Often, behavior that can be explained from the intentional stance (as Daniel Dennett calls it) as ‘motivated by social signaling’ has a deeper explanation from the designer stance in terms of architecture-based motivation. For the agent may have compiled a large collection of motive generators to act in certain ways that are likely to send useful social signals, each generator tailored to circumstances. The reactive motives themselves, however, may be disconnected (in the agent’s mind) from any explicit motivator to impress. Some of these motive generators were perhaps originally compiled with explicit knowledge of their social signaling usefulness. But they might just have been installed through evolution. Either way, the motives they generate are not to impress, but to do something, and that something may lead to impressing. There might be no immediate causal link, conscious or unconscious, between a motive to impress and the behavior.

Effectance (Robert White)

In Cognitive Productivity and elsewhere I recast Robert White’s concept of effectance, and intrinsic motivation, as architecture-based motivation (more generally meta-effectiveness). We do not need to invoke the concept of pleasure to explain play. Children are simply designed to want to do certain things which we call play.

Inside Jokes (Mirth) (M. Hurley, Dan Dennett, & R. Adams, 2011)

I have also argued that the very helpful theory of humor presented in Inside Jokes can be improved with the concept of architecture-based motivation. That is because a deep problem with that otherwise excellent theory is the assumption that humor is motivated by the pleasure of mirth. Sure, mirth is pleasant. But it is not parsimonious to assume that the brain learns to do humor for the sake of mirth.

Joke-telling and -getting happen too fast for computations of pleasure to be central to them. This is not to say that pleasure plays no role.

Gilbert Ryle on pleasure

In Born Standing Up: A Comic’s Life, the acclaimed humorist Steve Martin says:

Enjoyment while performing was rare—enjoyment would have been an indulgent loss of focus that comedy cannot afford.

Steve Martin’s words are consistent with what Gilbert Ryle said about pleasure in Dilemmas in 1953 and The Concept of Mind in 1956 :

Doubtless the absorbed golfer experiences numerous flutters and glows of rapture, excitement and self-approbation in the course of his game. But when asked whether or not he had enjoyed the periods of the game between the occurrences of such feelings, he would obviously reply that he had, for he had enjoyed the whole game. He would at no moment of it have welcomed an interruption; he was never inclined to turn his thoughts or conversation from the circumstances of the game to other matters. He did not have to try to concentrate on the game. (1956, p. 108)

In fact, the concept of architecture-based motivation builds on such ideas from Gilbert Ryle and others (e.g., Alan R. White).

Solidly refuted Roger Penrose’s quantum theory of consciousness

Sloman published a very deep and illuminating criticism of Roger Penrose’s The Emperor’s New Mind:

“The Emperor’s Real Mind”, Artificial Intelligence, 56, (1992), pp 355-396. (PDF)

Spectacular argument, worth reading. (Aaron and I attended Penrose’s lecture on his book at COGS Sussex in 1990 or 1991, before Sloman published his paper on the book.)

Visual and analogical capabilities and reasoning

From the 1960s onwards, Aaron published many groundbreaking papers on visual and analogical reasoning, papers that could keep AI researchers busy for decades. Many of them reveal weaknesses in both Fregean representations and connectionist models.

Virtual machine functionalism

Aaron was one of the first, if not the first, to recognize that the human mind (like many possible minds) involves a collection of virtual machines, a view also known as virtual machine functionalism.

This is a very grand idea whose relevance has not been properly recognized. In particular, it offers an alternative to stale reductionist conceptions and debates, mind-body dualism, etc. It points the way instead to a progressive research programme (cf. Lakatos).

See also his paper What cognitive scientists need to know about virtual machines.

On this website, I have published Understanding Ourselves with Virtual Machine Concepts, about Sloman’s concept. It also shows up in my book Cognitive Productivity: Using Knowledge to Become Profoundly Effective.

How to study minds

Aaron has developed a framework for understanding mind and doing theoretical AI that is relevant to Marr and Poggio’s “levels of understanding” framework (including as recently revised by Poggio). Compare Aaron’s paper “Prospects for AI as the general science of intelligence”. The Birmingham Cognition and Affect Project took several steps in this grand programme, but the journey is a long and ambitious one!

While we’re on the oft-neglected topic of theoretical methods for understanding mind, I should mention some excellent related work by Iris van Rooij and colleagues. See, for instance, van Rooij & Baggio (in press), Theory before the test: How to build high-verisimilitude explanatory theories in psychological science.

Conceptual analysis

Sloman has also systematically discussed the importance of conceptual analysis in AI.

As I argued in Cognitive Productivity: Using Knowledge to Become Profoundly Effective, few in psychology recognize the importance of conceptual analysis. Students are not taught conceptual analysis as part of their research methods. The otherwise excellent book by Keith Stanovich, How to think straight about psychology, has a strange argument against semantic exploration and analysis. Many seem not to have noticed that the theory of relativity is based on conceptual analysis!

However, there are exceptions. Some are mentioned in my Cognitive Productivity book. There’s also more recently this important paper by Agnes Moors & Jan De Houwer, which incidentally has implications for theories of perturbance, including ours.

Sloman does not claim that conceptual analysis alone can lead to a full understanding of the mind. However, failing to adequately understand the concepts one is implicitly using, or ought to be using, is a recipe for confusion and theoretical stagnation. Moreover, even the apt choice of words for a helpful concept is important and can be aided by conceptual analysis. (I have been collecting instances of what one might call “terminological obfuscation” in psychology, to be published later.)

Apart from his tips on doing conceptual analysis, one of Sloman’s writings on conceptual analysis that I highly recommend to anyone interested in mind is

Situated and embodied cognition

I’ve never seen this acknowledged in the literature. However, I suspect that Sloman is one of the major original influences that led to the situated / embodied cognition “movements”. My suspicion stems from his requirements for autonomous agency from the 1970s onwards, including in his 1978 book, and from his work on vision in particular, which led to this major paper:

On designing a visual system (Sloman, 1989)

It is perhaps no coincidence that Andy Clark was at Sussex from the late 1980s to the mid-1990s. Indeed, many of Sloman’s papers could be read as a call to consider the situation and body of agents when specifying the requirements of autonomous agency. (Sloman explicitly made connections with J.J. Gibson’s work from the 1970s onwards. [I know some of the history via Claude Lamontagne, whose 1976 Ph.D. in AI at Edinburgh on vision discussed Gibson’s ideas, though not in as complimentary terms as Aaron did.]) If a reader knows Andy, they can ask him what influence Aaron Sloman had on his development of embodied cognition; please let us know in the comments below.

Despite the connection, Sloman and I have both been critical of those movements for throwing the baby out with the bathwater. Andy Clark himself saw beyond the narrow bounds of that movement, and was perhaps a bit surprised by some of his followers’ critical responses to his Behavioral and Brain Sciences paper on prediction.

After his festschrift: the evolution of life and minds

After his 2011 festschrift, and thus after Boden’s paper, Aaron has continued to make great progress in many areas. He has been devoting most of his energy to his highly ambitious meta-morphogenesis project and meta-configured genome project:

Was Alan Turing thinking about this before he died, only two years after publishing his 1952 paper on Morphogenesis?

Here Aaron has used everything he’s learned in his career to resume and reformulate projects from his DPhil, pre-AI, and early AI days, regarding mathematics and analogical reasoning (which are alluded to below).

Some key questions

What makes various evolutionary or developmental trajectories possible?

What sorts of information processing mechanism (discrete? continuously variable? deterministic? stochastic?) allowed brains to acquire and manipulate spatial (topological/geometric) information in ways that eventually led to the discoveries of ancient mathematicians, including axioms, constructions and theorems in Euclid’s Elements?

A key concept: Construction kits for evolving life (including evolving minds and mathematical abilities).

Some other recognitions

See other recognitions on the Wikipedia page about Aaron Sloman.

A few links and references

- Description of the K. Jon Barwise Prize – The American Philosophical Association

- Jon Barwise – Wikipedia

- Aaron Sloman’s homepage.

- My blog post on virtual machines for cognitive science.

More links should be added here.

Conclusion

A productive response to the concept of ‘Sleeping Beauty’ papers, which slumber for decades, is for scientists to try to shorten the time between the publication of important ideas and their discovery by the researchers to whom they are relevant.

It seems apposite to me that, in the late stage of his career, Aaron Sloman has been following up on Turing’s last paper, on morphogenesis, which itself was a sleeping beauty. (Compare “Forging patterns and making waves from biology to geology: a commentary on Turing (1952) ‘The chemical basis of morphogenesis’”, Philosophical Transactions of the Royal Society B: Biological Sciences.)

I think Aaron’s work could and should be even more widely recognized than it is. Hopefully the K. Jon Barwise APA Prize to Aaron will speed things up a bit.

Footnotes

- perturbance: We have a paper in review on the concept of perturbance that traces some of its history: Beaudoin, Pudlo & Hyniewska (accepted subject to revisions). “Mental perturbance: An integrative design-oriented concept for understanding repetitive thought, emotions and related phenomena involving a loss of control of executive functions”. See also our 2017 paper: http://summit.sfu.ca/item/16776. They both delve into the history of Aaron’s concept of perturbance and project it, a bit altered, into the future.

Revision history/ status

As of 2020-06-18, this is still a very rough draft. May contain errors and significant omissions.

- 2020-06-18 11:48 AM PT. Added DRAFT label. Aaron hasn’t fully read this yet, but he spotted errors, particularly with respect to the Maggy Thatcher section. So I commented out that text. I will revise the text after hearing back from Aaron.

- 2020-06-27 3:21 PM PT. Reintroduced the Thatcher section with more details, following an email exchange with Aaron Sloman.

Thanks for this appreciation of some of Aaron’s works. One should mention his paper about “Varieties of Evolved Forms of Consciousness” which was recently published in a special issue related to the 2019 conference “Models of Consciousness” in Oxford, UK (Aaron was one of the speakers.)

https://doi.org/10.3390/e22060615